Introduction

Transparent VAPT services play a crucial role in strengthening the security of an organization's infrastructure. Vulnerability assessment and penetration testing (VAPT) has become an indispensable defense against external threats, and as cyber threats increase, it is essential that these services are delivered with openness that earns client trust.

Transparency from VAPT service providers builds trust between clients and providers, which in turn improves security, decision-making, and compliance.

Why Transparent VAPT Services Matter

Transparency in VAPT services means clients know what is being assessed, which tools are used, what the findings are, and which remediation strategies are being followed.

Building Trust and Confidence: Trust and confidence are the foundation of transparent VAPT services, which ensure clients fully understand how vulnerabilities are detected and mitigated. When the provider is open about the type of testing being conducted, the vulnerabilities found, and what remediation will look like, the relationship rests on trust and integrity.

Better Decision-Making: With detailed reports and insights from VAPT vendors, organizations can make better decisions. Knowing their vulnerabilities and likely risks lets them focus security measures on the most urgent threats.

Continuous Improvement in Security: An open approach helps the business and its VAPT vendor work together to refine security strategies over time. This leads to steady improvement and a more robust defense against threats.

Regulatory Compliance: Many industries have stringent data protection regulations. Transparent VAPT services help a business show that it meets industry standards, which reduces legal exposure if a dispute arises.

How to Assess Transparency in VAPT Providers

How can you test for transparency and openness when selecting a VAPT service provider?

Here is a checklist of the main criteria:

1. Clarity in Methodologies: A provider offering transparent VAPT services explains its testing methodologies, tools, and techniques clearly. Knowing how the work is done helps clients understand what to expect and judge whether the approach has been effective.

2. Detailed Reporting: Comprehensive reporting is the hallmark of transparent VAPT services, ensuring actionable insights and remediation steps. The report should be concise yet detailed enough that the client knows exactly what to do next to improve its security posture.

3. Clear Communication: Communication should be effective throughout the VAPT process. Providers should not hesitate to answer questions, clarify findings, or recommend next steps. A provider that is responsive from the start of the engagement shows a commitment to transparency and teamwork.

4. Client References and Case Studies: Client testimonials, case studies, and references are good sources of insight into a VAPT provider's transparency. Positive feedback from other organizations suggests that the provider has delivered clear, understandable, and actionable security assessments.

5. Follow-Up and Support: Transparency does not end with the final report. A reliable VAPT service provider should be ready to provide continued support for the vulnerabilities identified during the assessment. It should be available to help with remediation, answer questions, and confirm that fixes are effectively in place.

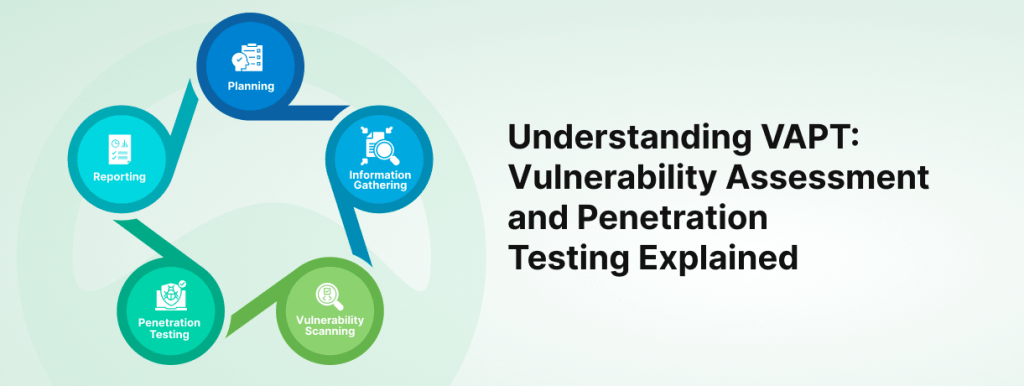

Steps Toward a Transparent VAPT Process for Providers

Providers offering transparent VAPT services build secure, trustworthy relationships with clients.

Clear communication, ongoing collaboration, and post-assessment support make transparent VAPT services more effective and reliable for long-term cybersecurity resilience.

Initial Consultation and Needs Assessment: Providers should hold an in-depth consultation about the specific needs of the client's infrastructure. Tailoring services to the organization's objectives and risks is essential.

Clear Communication of Tools and Techniques: The tools used and the techniques applied during the VAPT process need to be clearly communicated to the client. The technical details of how vulnerability scanning and penetration testing are designed should be explained so the client stays informed at every step.

Ongoing Collaboration: A transparent provider welcomes feedback and works collaboratively with the client during testing. This continuous input builds a sense of partnership, with both sides working toward shared security goals.

Post-Assessment Follow-Up: The report should not simply be handed over after the testing phase; it should also guide the client in planning remediation. Ongoing support, check-ins, and additional services help the client implement changes effectively.

Benefits of Transparent VAPT Services for Businesses

Increased Trust and Accountability: A transparent service provider creates trust by holding itself accountable. Clients are more likely to have faith in a provider that lets them understand its processes and findings.

Optimized Resource Allocation: With detailed reports and clear insights, businesses can decide effectively how to allocate security resources. Knowing which vulnerabilities are major and which are minor helps a company prioritize fixes and minimize potential risks.

Improved Security Posture: Full visibility of their vulnerabilities, along with a clear path for remediation, enables businesses to strengthen their cybersecurity framework. Continuous improvement also keeps security responses aligned with emerging threats.

Simplified Compliance: Transparent VAPT services make compliance easier for organizations that need to meet industry-specific standards. Vulnerabilities and remediation steps are well documented and ready for audits and reviews by the relevant regulatory bodies, which also means less paperwork.
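To make the resource-allocation point concrete, here is a minimal sketch of how findings from a VAPT report might be ordered by severity so that the most urgent fixes come first. The `Finding` fields, the four-level severity scale, and the sample findings are all illustrative assumptions, not the format of any particular provider's report; real reports may use CVSS scores or another rating scheme.

```python
from dataclasses import dataclass

# Hypothetical severity scale (assumption); lower rank means more urgent.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    """One illustrative report entry: what was found and how to fix it."""
    title: str
    severity: str
    remediation: str


def prioritize(findings):
    """Return findings ordered from most to least severe."""
    return sorted(findings, key=lambda f: SEVERITY_RANK[f.severity])


# Made-up sample findings, for illustration only.
findings = [
    Finding("Verbose server banner", "low", "Suppress version headers"),
    Finding("SQL injection in login form", "critical", "Use parameterized queries"),
    Finding("Outdated TLS configuration", "medium", "Disable legacy protocol versions"),
]

for f in prioritize(findings):
    print(f"[{f.severity.upper()}] {f.title} -> {f.remediation}")
```

A report that supplies severity and remediation for every finding is what makes this kind of ordering possible; without those details, prioritization becomes guesswork.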

Why Choose Codelynks for Transparent VAPT Services?

At Codelynks, we genuinely believe transparency is the key to a long-lasting relationship. From vulnerability identification through remediation and finalization, we are transparent about every step of our VAPT services. Here's what sets us apart:

Comprehensive Reports: We present clear, well-written reports showing vulnerabilities identified, related risks, and suggested remediation efforts.

Tailored Solutions: Each of our VAPT services is tailored to your infrastructure and industry.

Expert Advisory: After the assessment, our cybersecurity experts work closely with you to ensure the recommended security measures are implemented effectively.

Regulatory Compliance: We support you in meeting the necessary industry regulations so that your business remains compliant.

Ongoing Support: We offer continuous follow-up assistance to help you work through the complexities of vulnerability remediation and further security improvements.

To learn more about our transparent VAPT services, visit our website or get in touch with us today.

Conclusion

In the fast-paced world of cybersecurity, nothing replaces transparent practice for building trust and strong defenses. By choosing a VAPT provider that focuses on open and transparent reporting, companies can make informed decisions, stay compliant, and keep improving their cybersecurity strategies.

Codelynks is ready to guide your organization with transparent, customized VAPT services that help your business maintain security and be better prepared for emerging threats.